Technology keeps evolving, and one of the most striking recent advances is the rise of generative AI. It has changed the way we create content and interact with computers. But how exactly does generative AI work, and what does it mean for the future of technology? In this blog post, we look at the inner workings of generative AI and explore its impact on our digital landscape.

What is Generative AI?

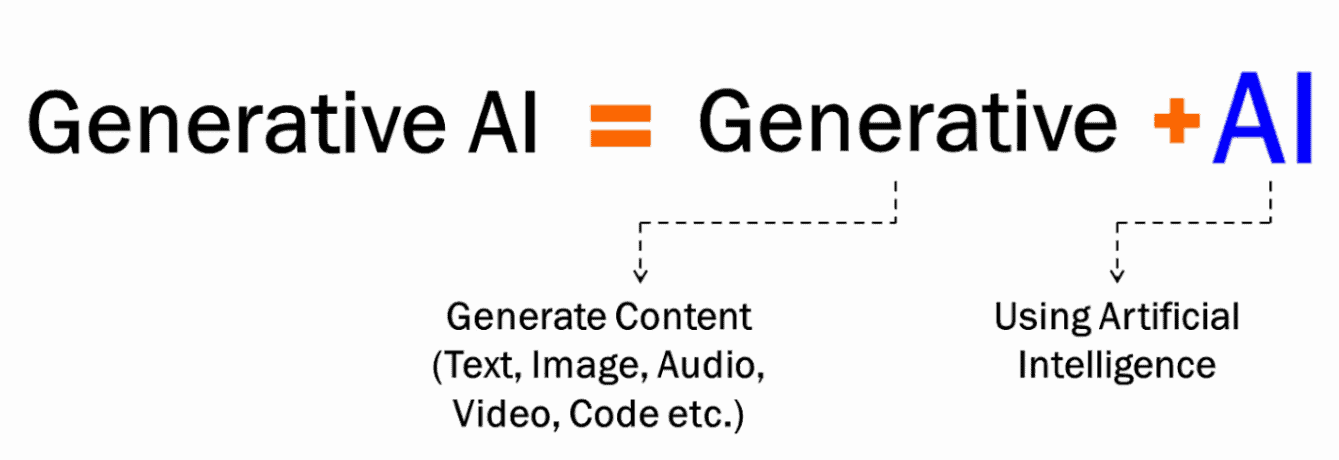

Generative Artificial Intelligence (Generative AI) is a rapidly growing field of study within the broader domain of artificial intelligence. It involves the development and application of algorithms, systems, and models that create or generate new content, ideas, or designs without direct human involvement. This cutting-edge technology has made significant advancements in recent years, leading to groundbreaking applications in a variety of industries.

At its core, generative AI uses machine learning to learn the statistical patterns in its training data and then produce new outputs that follow those patterns. Unlike traditional rule-based programs, which map specific inputs to predetermined outputs, a generative model can produce outputs that were never explicitly programmed. The results can appear creative, although they are derived from patterns in the training data.

History and Emergence of Generative AI

Generative Artificial Intelligence (AI) has evolved significantly from its origins in the 1950s, when Alan Turing asked whether machines could think and Arthur Samuel coined the term “machine learning.” Initially, AI development focused on rule-based systems that could perform specific tasks but were limited by the need for extensive human-crafted rules and data. The shift towards more adaptable AI began with the introduction of neural networks, which allowed machines to learn from data and generate new content based on identified patterns and structures.

Key advancements in generative AI include Variational Autoencoders (VAEs), introduced in 2013 by Diederik Kingma and Max Welling, and Generative Adversarial Networks (GANs), introduced in 2014 by Ian Goodfellow and colleagues at the Université de Montréal. VAEs learn a compressed representation of the data and generate new samples by decoding from it, while GANs pit two neural networks against each other: one creates samples and the other evaluates how realistic they are. These breakthroughs have enabled machines to produce highly realistic images, videos, and audio, capturing significant interest from researchers and businesses. As generative AI continues to advance, it is poised to drive future innovations across various industries.

Understanding the difference between generative AI and traditional ML

While both generative AI and traditional machine learning (ML) are branches of artificial intelligence, they differ significantly in their goals and functionalities.

Generative AI: Focuses on creating entirely new data that resembles the data it’s trained on. This includes generating images, text, music, code, or even 3D models.

Traditional ML: Analyzes existing data to make predictions, classifications, or decisions based on patterns and relationships within the data. It doesn’t create new data but rather uses existing data to understand and make inferences.
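To make the contrast concrete, here is a minimal sketch in Python. It is only an illustration under simplifying assumptions: the height values, the "tall"/"short" rule, and all variable names are made up for this example, and a single Gaussian stands in for a real generative model.

```python
import random
import statistics

# Toy "training data" (hypothetical values, in centimetres).
heights_cm = [158.0, 162.5, 171.0, 175.5, 180.0, 168.0]

# Traditional ML flavour: learn a decision rule from the data,
# then use it to label inputs. No new data is created.
threshold = statistics.mean(heights_cm)

def classify(height):
    return "tall" if height > threshold else "short"

# Generative flavour: fit a distribution to the data,
# then sample brand-new data points that resemble it.
mu = statistics.mean(heights_cm)
sigma = statistics.stdev(heights_cm)
rng = random.Random(42)  # fixed seed so the run is repeatable
new_heights = [rng.gauss(mu, sigma) for _ in range(3)]

print(classify(180.0))   # label for an existing input
print(new_heights)       # three newly generated values
```

The classifier can only answer questions about inputs it is given, while the fitted distribution can be sampled as often as needed to produce values that were never in the dataset.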

The Technology Behind Generative AI

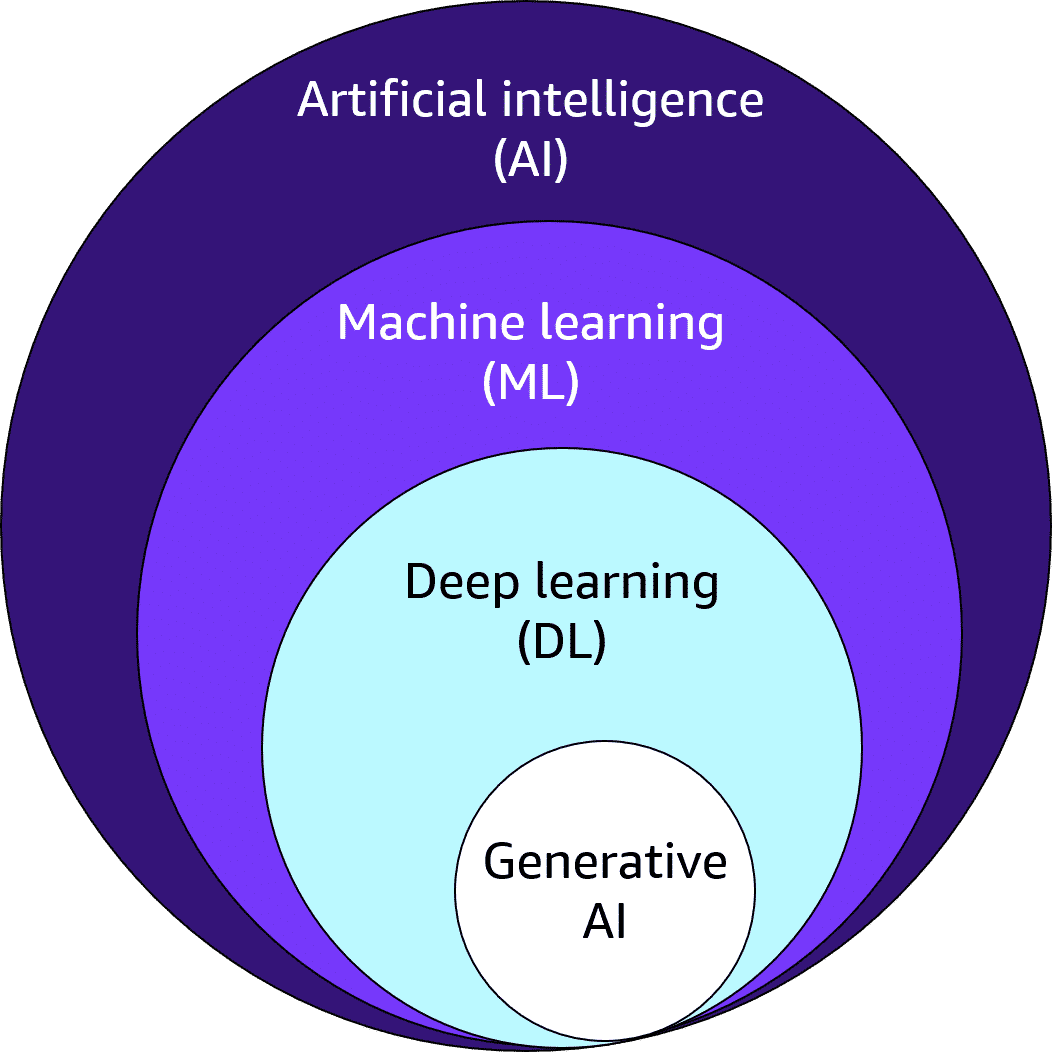

The technology behind generative AI is rooted in several advanced concepts within the broader fields of artificial intelligence (AI), machine learning (ML), and deep learning (DL).

Here’s an overview of the key technologies and methodologies that power generative AI:

1. Artificial Intelligence (AI)

AI encompasses the creation of systems capable of performing tasks that typically require human intelligence. This includes tasks such as reasoning, learning, and problem-solving.

2. Machine Learning (ML)

ML is a subset of AI that involves training algorithms to recognize patterns and make decisions based on data. It enables systems to learn and improve from experience without being explicitly programmed for each task.

3. Deep Learning (DL)

DL, a subset of ML, uses neural networks with many layers to process and learn from vast amounts of data. These deep neural networks can automatically learn hierarchical representations of data, making them particularly effective for complex tasks like image and speech recognition.

Deep Learning Models:

- At the core of many generative AI systems are deep learning models. Two classic families are Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs).

- GANs: These models involve two competing neural networks: a generator and a discriminator. The generator tries to create realistic data, while the discriminator attempts to distinguish the generated data from real data. This adversarial training process helps the generator improve its ability to produce increasingly realistic and convincing outputs.

- VAEs: These models encode the input data into a compressed representation and then learn to decode it back into a new, similar data point. This allows VAEs to capture the essential features and relationships within the data and generate variations or new examples that stay true to the original style.
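The encode, sample, decode flow of a VAE can be sketched in a few lines of Python. This is a rough illustration, not a trained model: `encode` and `decode` are hypothetical stand-ins (fixed linear maps) for neural networks, and the sampling step and KL term shown here are standard VAE ingredients that the description above does not spell out.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps, with eps drawn from N(0, 1)."""
    eps = rng.gauss(0.0, 1.0)
    return mu + math.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, 1) ) for a single latent dimension."""
    return -0.5 * (1.0 + log_var - mu * mu - math.exp(log_var))

def encode(x):
    # Hypothetical encoder: a fixed linear map standing in for a trained network.
    # Returns the mean and log-variance of the latent distribution.
    return 0.5 * x, -2.0

def decode(z):
    # Hypothetical decoder: maps a latent value back to data space.
    return 2.0 * z

rng = random.Random(0)
x = 3.0
mu, log_var = encode(x)               # compress the input
z = reparameterize(mu, log_var, rng)  # sample near the encoded point
x_variation = decode(z)               # decode into a new, similar data point

print(x_variation, kl_to_standard_normal(mu, log_var))
```

In a real VAE the encoder and decoder are trained jointly, with the KL term keeping the latent distribution close to a standard normal so that random latent samples decode into plausible data.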

How Does Generative AI Work?

In a GAN, the generator network is fed random noise, which it transforms into new samples such as images. These generated samples are passed, together with real examples, to the discriminator network, which estimates how likely each one is to be real. No sample is discarded: the discriminator is updated to tell real data from generated data more accurately, and the generator is updated, using the discriminator’s feedback, to produce samples that are more likely to be judged real.

Through this back-and-forth process of generating and evaluating data, both networks gradually improve, and the generator can learn to create outputs realistic enough to fool people into believing they are real. Improvement at every iteration is not guaranteed, though: GAN training is known to be sensitive to its settings and can be unstable.
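The generate-and-evaluate loop can be sketched as a toy one-dimensional GAN in plain Python. This is a deliberately tiny illustration under stated assumptions: the generator is a linear map `a * z + b`, the discriminator is a single logistic unit, the gradients are written out by hand, and the generator uses the common non-saturating loss. The function and parameter names are invented for this example.

```python
import math
import random

def sigmoid(s):
    # Numerically stable logistic function.
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: x_fake = a * z + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        x_real = rng.gauss(real_mean, 1.0)  # one real sample
        z = rng.gauss(0.0, 1.0)             # random noise for the generator
        x_fake = a * z + b                  # one generated sample

        # Discriminator step: minimise -log D(x_real) - log(1 - D(x_fake)).
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        grad_w = (d_real - 1.0) * x_real + d_fake * x_fake
        grad_c = (d_real - 1.0) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: minimise -log D(x_fake), using the updated discriminator.
        d_fake = sigmoid(w * x_fake + c)
        grad_x = (d_fake - 1.0) * w  # gradient of the loss w.r.t. x_fake
        a -= lr * grad_x * z
        b -= lr * grad_x
    return a, b, w, c

a, b, w, c = train_toy_gan()
print("generator:", a, b, "discriminator:", w, c)
```

Both networks are updated on every step, with real and generated samples alike. Because this discriminator is linear, it can mainly push the generator’s offset `b` toward the real data, and the parameters may oscillate rather than settle, which mirrors the instability often seen in practice.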

One of the key factors driving the success of generative AI algorithms is their ability to learn from unlabeled data. Unlike supervised approaches, which need large amounts of accurately labeled data for training, GANs only require unlabeled samples such as images or text, which significantly reduces the manual labeling effort.

The potential impact of this technology on various industries cannot be overstated. From art and design to healthcare and finance, generative models have been able to produce images, videos, and even written content that closely resemble what we see in real life. With further advancements in this field, these tools may increasingly assist human artists and writers, and in some tasks substitute for them.

However, there are also concerns about unethical use cases such as deepfake videos and fake news articles being created and circulated. This highlights the need for responsible development and usage of generative AI technologies.

Generative AI has opened up a whole new realm of possibilities in the world of technology. Its ability to create realistic outputs from unlabeled data is revolutionizing various industries while also raising ethical concerns. With further advancements in this field, it will be exciting to see how it continues to evolve and shape our future.

Use cases for generative AI

Generative AI has a wide range of potential applications across diverse industries. Here are some of the most prominent use cases:

1. Content Creation:

- Image and video generation: Create realistic images, videos, and animations based on text descriptions or prompts. This can be used for marketing materials, product design, visual effects in movies and games, and even creating art.

- Music generation: Compose music in different styles and genres, generate sound effects, or even create personalized soundtracks for videos or games.

- Text generation: Generate different creative text formats like poems, code, scripts, musical pieces, email, letters, etc. This can be used for marketing copy, writing blog posts, generating product descriptions, or even writing fiction.

2. Customer Experience:

- Chatbots and virtual assistants: Develop AI-powered chatbots and virtual assistants that can engage in natural conversations with customers, answer questions, and provide support.

- Personalized recommendations: Generate personalized product recommendations, content suggestions, and marketing materials based on individual customer preferences and behavior.

3. Product Development:

- Design and prototyping: Generate new product design concepts and prototypes based on user needs and specifications. This can accelerate the design process and lead to more innovative products.

- Code generation: Assist developers with writing code by suggesting code snippets, completing code based on partial input, and even generating entire programs.

4. Other Applications:

- Drug discovery: AI can generate new potential molecular structures for drugs, accelerating the process of drug discovery and reducing costs.

- Fraud detection: Generate synthetic examples of fraudulent activity to help train and test detection systems, which then analyze large amounts of data to detect unusual patterns.

- Scientific research: Generate new hypotheses, design experiments, and analyze complex data sets to accelerate scientific discovery.

These are just a few examples, and the potential applications of generative AI are constantly evolving. As the technology continues to develop, we can expect to see even more innovative and impactful use cases emerge.

Benefits of generative AI

Generative AI holds significant potential for transforming various aspects of business operations. It can simplify the interpretation and comprehension of existing content and autonomously generate new content. Developers are investigating how generative AI can enhance current workflows and are considering restructuring workflows to fully leverage this technology. Some of the potential advantages of employing generative AI include:

- Automating the creation of written content.

- Reducing the effort required to respond to emails.

- Enhancing responses to specific technical queries.

- Generating realistic depictions of individuals.

- Condensing complex information into a clear narrative.

- Streamlining the process of creating content in a specific style.

However, early implementations of generative AI also highlight several limitations. These challenges often stem from the specific methods used for different applications. For example, while a summary of a complex topic may be more readable than a detailed explanation with supporting sources, this readability often comes at the cost of transparency regarding the information’s origins.

Limitations of generative AI

- Difficulty in identifying the sources of content.

- Challenges in assessing the bias of original sources.

- The creation of realistic-sounding content that may obscure inaccuracies.

- Complexity in adjusting the AI to new circumstances.

- Potential to overlook bias, prejudice, and harmful content.

These limitations underscore the need for careful implementation and usage of generative AI to ensure the reliability and accuracy of its outputs.

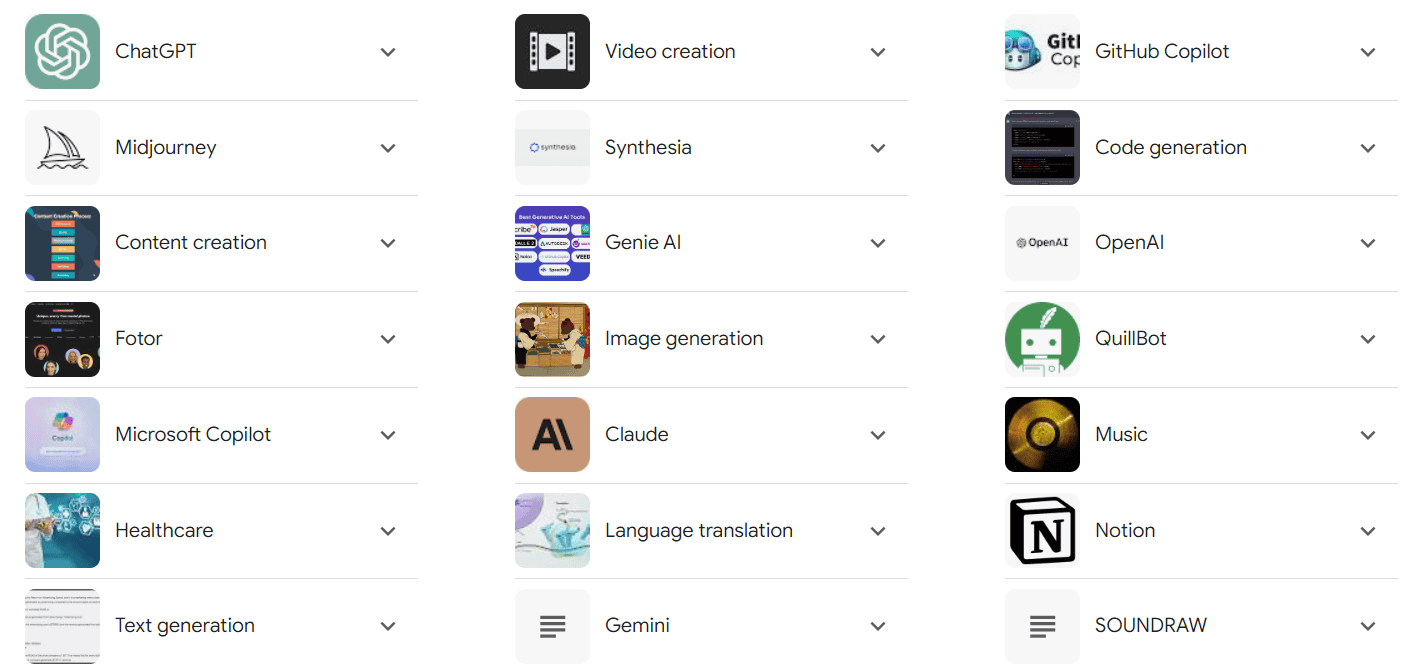

Some examples of generative AI tools

There is a wide range of generative AI tools available, each with its own strengths and specializations.

Here are some popular examples:

1. Text Generation:

- OpenAI’s GPT-3: A powerful language model capable of generating different creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

- Jasper: A tool focused on content creation, helping users write blog posts, social media content, marketing copy, and more.

- Writesonic: Another content creation platform that helps generate different types of written content, including product descriptions, website copy, and email marketing materials.

2. Image Generation:

- DALL-E 2: An image generation model from OpenAI, capable of creating realistic and creative images based on text descriptions.

- Midjourney: Another popular image generation tool known for its artistic and dreamlike outputs.

- NightCafe Studio: A user-friendly platform for generating images and exploring different artistic styles.

3. Music Generation:

- Jukebox: OpenAI’s music generation model that can create music in various styles and genres.

- Amper Music: A tool for composing and producing original music through AI assistance.

- Mubert: A platform for generating ambient and background music for different purposes.

4. Other Tools:

- Murf.ai: Generates high-quality voice-overs for videos and presentations.

- Synthesia: Creates realistic AI avatars that can be used in videos and other media.

- Codex: A tool from OpenAI that helps developers write and improve code by suggesting completions and fixing errors.

These are just a few examples, and the landscape of generative AI tools is constantly evolving. As technology advances, we can expect to see even more innovative and specialized tools emerge.

AWS Services for Generative AI

AWS provides a comprehensive suite of services to support the development and deployment of generative AI models. Here are some key services:

- Amazon SageMaker: SageMaker simplifies the process of building, training, and deploying machine learning models. It supports various frameworks like TensorFlow, PyTorch, and MXNet, making it a versatile choice for generative AI projects.

- AWS Deep Learning AMIs: These AMIs provide pre-installed deep learning frameworks, enabling researchers and developers to quickly set up their environments and start training generative models.

- Amazon EC2 P3 Instances: Powered by NVIDIA V100 GPUs, P3 instances provide the computational power needed to train complex generative models efficiently.

- AWS Lambda: Lambda allows you to run code without provisioning or managing servers, which is useful for deploying lightweight generative AI applications and APIs.

- Amazon Polly: Polly is a text-to-speech service that leverages generative AI to convert text into natural-sounding speech, enabling voice applications and accessibility features.


Building a Generative AI Model on AWS

Let’s walk through a high-level overview of building a generative AI model on AWS:

- Data Preparation: Start by collecting and preparing your dataset. AWS Glue can help with data extraction, transformation, and loading (ETL) tasks.

- Model Development: Use Amazon SageMaker to develop your generative model. SageMaker Studio provides an integrated development environment (IDE) for building and debugging your models.

- Training: Leverage SageMaker’s managed training capabilities to train your model at scale. Use Spot Instances to optimize costs.

- Deployment: Deploy your trained model using SageMaker’s hosting services. You can create endpoints for real-time inference or batch transform jobs for large-scale predictions.

- Monitoring: Utilize Amazon CloudWatch and SageMaker Model Monitor to keep track of your model’s performance and ensure it operates reliably in production.
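Once a model is deployed to a real-time endpoint, calling it from application code might look roughly like the sketch below. It uses the `invoke_endpoint` call of the boto3 `sagemaker-runtime` client. The endpoint name and the `{"inputs": ...}` payload shape are assumptions made for illustration; the actual request and response schema depends on the model container you deploy, and the sketch assumes the endpoint returns JSON.

```python
import json

def build_invoke_args(prompt, endpoint_name="my-generative-endpoint"):
    # endpoint_name and the {"inputs": ...} payload are hypothetical;
    # check the schema expected by your own model container.
    return {
        "EndpointName": endpoint_name,
        "ContentType": "application/json",
        "Body": json.dumps({"inputs": prompt}).encode("utf-8"),
    }

def generate(prompt):
    # boto3 is imported lazily so build_invoke_args works without AWS set up.
    import boto3

    client = boto3.client("sagemaker-runtime")
    response = client.invoke_endpoint(**build_invoke_args(prompt))
    # The response body is a stream; this assumes it contains JSON.
    return json.loads(response["Body"].read())

# Building the request needs no credentials; calling generate() does.
print(build_invoke_args("A short poem about clouds")["EndpointName"])
```

Keeping the request-building step separate from the network call makes the payload easy to inspect and unit test before any AWS resources exist.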


Azure Services for Generative AI

Azure provides a rich ecosystem of services to support the development and deployment of generative AI models. Here are some key services:

- Azure Machine Learning: Azure Machine Learning offers a comprehensive platform to build, train, and deploy machine learning models. It supports various frameworks like TensorFlow, PyTorch, and Scikit-learn, making it a versatile choice for generative AI projects.

- Azure Databricks: This Apache Spark-based analytics platform is optimized for Azure. It simplifies big data and AI workflows, making it easier to develop and train generative models at scale.

- Azure Cognitive Services (now part of Azure AI services): These pre-built AI services enable developers to add capabilities such as language understanding, image recognition, and text-to-speech to their applications.

- Azure Kubernetes Service (AKS): AKS provides a managed Kubernetes environment for deploying containerized generative AI models, ensuring scalability and reliability.

- Azure Batch: This service allows you to run large-scale parallel and high-performance computing (HPC) batch jobs, which is useful for training complex generative models.


Building a Generative AI Model on Azure

Let’s walk through a high-level overview of building a generative AI model on Azure:

- Data Preparation: Start by collecting and preparing your dataset. Use Azure Data Factory to orchestrate and automate data movement and transformation.

- Model Development: Use Azure Machine Learning to develop your generative model. Azure Machine Learning studio provides a web-based workspace with notebooks for building and debugging your models.

- Training: Leverage Azure Machine Learning’s managed training capabilities to train your model at scale. Use Spot VMs to optimize costs.

- Deployment: Deploy your trained model using Azure Kubernetes Service or Azure Container Instances for real-time inference. You can also use Azure Machine Learning endpoints for batch scoring.

- Monitoring: Utilize Azure Monitor and Application Insights to keep track of your model’s performance and ensure it operates reliably in production.
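A deployed real-time endpoint is typically called over HTTPS with a scoring URI and a key. The sketch below builds such a request with Python’s standard library only. The URI, the key, and the `{"inputs": ...}` payload shape are placeholders chosen for illustration; the real schema depends on the scoring script behind your endpoint, and the sketch assumes the endpoint uses key-based authentication and returns JSON.

```python
import json
import urllib.request

def build_scoring_request(scoring_uri, api_key, prompt):
    # The {"inputs": ...} payload is hypothetical; match your scoring script.
    body = json.dumps({"inputs": prompt}).encode("utf-8")
    return urllib.request.Request(
        scoring_uri,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )

def generate(scoring_uri, api_key, prompt):
    request = build_scoring_request(scoring_uri, api_key, prompt)
    # Sends the request; this assumes the endpoint responds with JSON.
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())

# Building the request does not touch the network.
req = build_scoring_request("https://example.invalid/score", "YOUR-KEY", "Hello")
print(req.get_method(), req.full_url)
```

In production code the key would come from a secret store or environment variable rather than a literal string.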

Conclusion

Generative AI is revolutionizing how we create and interact with digital content. By leveraging neural networks to identify patterns in data, generative AI models can produce new, original content across various modalities, including text, images, audio, and more. This technology offers immense benefits, such as enhancing creative processes, improving efficiency, and enabling sophisticated data analysis.

Despite its promise, generative AI faces significant challenges, such as the need for vast computational resources, high-quality data, and efficient data pipelines. However, ongoing advancements and the support of leading tech companies are helping to address these obstacles.

As generative AI continues to evolve, its applications will expand, driving innovation across industries like healthcare, entertainment, automotive, and more. By overcoming current limitations, generative AI will unlock new possibilities, transforming our approach to content creation and problem-solving in the digital age.
