Large language models (LLMs) like GPT, Bard, and PaLM are transforming how we interact with technology. LangChain (LC) is an open-source framework that simplifies building applications powered by these models. It provides modular components and APIs that streamline development, making it easier to create sophisticated, context-aware AI applications.

This blog post explains what LangChain is and how it works.

We will explore:

- What is LangChain?

- Benefits of LangChain

- How LangChain works

- Applications of LangChain

- Core components of LangChain

- LangChain Requirements With AWS

- Conclusion

- FAQs

What is LangChain?

LangChain is an open-source framework that helps developers build applications using large language models (LLMs). It is available as both a Python and a JavaScript library and provides tools and APIs that make it easier to create LLM-based applications such as chatbots and virtual assistants.

It acts as a universal interface for various LLMs, offering a centralized environment for developing these applications and integrating them with external data sources and workflows. Its modular design lets developers compare different prompts and models without rewriting code. This flexibility also makes it possible to use multiple LLMs in a single application, for example one model to interpret user queries and another to generate responses.

Created by Harrison Chase in October 2022, LC rapidly gained popularity. By June 2023, it had become the fastest-growing open-source project on GitHub. The launch of OpenAI’s ChatGPT shortly after LC’s release contributed to its widespread adoption, making generative AI more accessible.

LC supports various use cases for LLMs and natural language processing (NLP), including chatbots, intelligent search, question-answering, summarization services, and virtual agents capable of automating tasks.

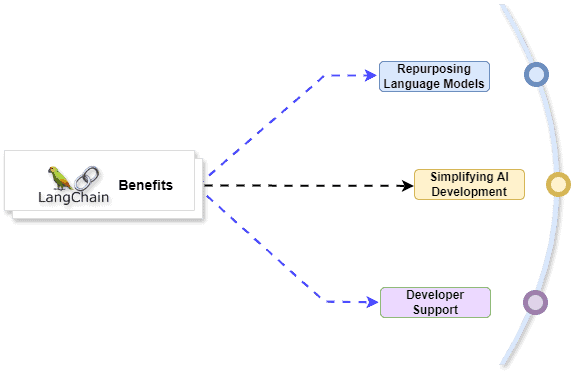

Benefits of LangChain

Repurposing Language Models:

LC allows organizations to repurpose LLMs for specific applications without retraining or fine-tuning them. Development teams can build complex applications that reference proprietary information to improve model responses. For instance, LC can be used to build an application that reads data from internal documents and summarizes it into conversational responses. LC also supports Retrieval Augmented Generation (RAG) workflows, which supply new information to the model at prompt time, reducing hallucinations and improving accuracy.

Simplifying AI Development:

LC abstracts the complexity of integrating data sources and refining prompts, making AI development simpler. Developers can customize sequences of steps to build complex applications quickly. Instead of writing extensive business logic, software teams can use the templates and libraries LC provides to reduce development time.

Developer Support:

LC offers tools to connect LLMs with external data sources and is supported by an active open-source community. Organizations can use LC for free and benefit from community support, making it a cost-effective and well-supported choice for AI developers.

How LangChain Works

Imagine LC as a recipe book for building AI applications with large language models (LLMs). LLMs are powerful tools that can answer questions and create text, but they need guidance to be truly useful.

LC breaks down building an AI application into smaller steps. These steps, called links, can be anything from getting user input to translating languages or asking the LLM a question. Developers can then chain these links together to create a recipe, or chain, for their specific application.

Here’s a simpler way to think about it:

- Ingredients (Data): This could be user input, information from the web, or anything your application needs to work.

- Tools (Links): These are the actions LC can perform, like translating languages, asking the LLM questions, or formatting text.

- Recipes (Chains): These are the workflows you create by chaining links together. They tell LC exactly what to do with the ingredients (data) to get the desired outcome.

By using chains, developers can easily adapt LLMs to different tasks without having to retrain the entire model from scratch. This makes building and customizing AI applications faster and more efficient.
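The recipe analogy above can be sketched in a few lines of plain Python. This is only an illustration of the idea, not LangChain's actual API: `make_chain`, `clean_input`, `build_prompt`, and `fake_llm` are hypothetical names, and `fake_llm` is a stand-in for a real model call.

```python
from functools import reduce
from typing import Callable

# A "link" is any function that takes text and returns text.
Link = Callable[[str], str]


def make_chain(*links: Link) -> Link:
    """Compose links left to right into one callable (the "recipe")."""
    def run(data: str) -> str:
        return reduce(lambda value, link: link(value), links, data)
    return run


def clean_input(text: str) -> str:
    # Collapse repeated whitespace in the user's input.
    return " ".join(text.split())


def build_prompt(question: str) -> str:
    # Wrap the cleaned question in an instruction.
    return f"Answer briefly: {question}"


def fake_llm(prompt: str) -> str:
    # Hypothetical stand-in: a real application would call a model here.
    return f"[model reply to: {prompt}]"


qa_chain = make_chain(clean_input, build_prompt, fake_llm)
print(qa_chain("  What is   LangChain? "))
# prints: [model reply to: Answer briefly: What is LangChain?]
```

Swapping one link for another, such as a different prompt builder, changes the application's behavior without touching the model itself.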

Applications of LangChain

LangChain empowers developers to create a wide range of AI applications by simplifying how they work with large language models (LLMs).

Here are some key applications of LangChain:

- Chatbots: Imagine chatbots that can understand context and have more natural conversations. LangChain can help build these by guiding the LLM through the conversation flow.

- Q&A Systems: LangChain can create question-answering systems that tap into different data sources and use the LLM to provide insightful answers.

- Content Generation: Need help writing marketing copy or social media posts? LangChain can chain together prompts and instructions to get the LLM to generate creative content.

- Summarization Services: LangChain can be used to build tools that take lengthy articles or documents and use the LLM to create concise summaries.

- Context-Aware Workflows: LangChain allows developers to design workflows that adapt to the situation. This can be useful for tasks like customer service or technical support.

Overall, LangChain reduces the complexity of working with LLMs, making it easier for developers to build and deploy powerful AI applications.

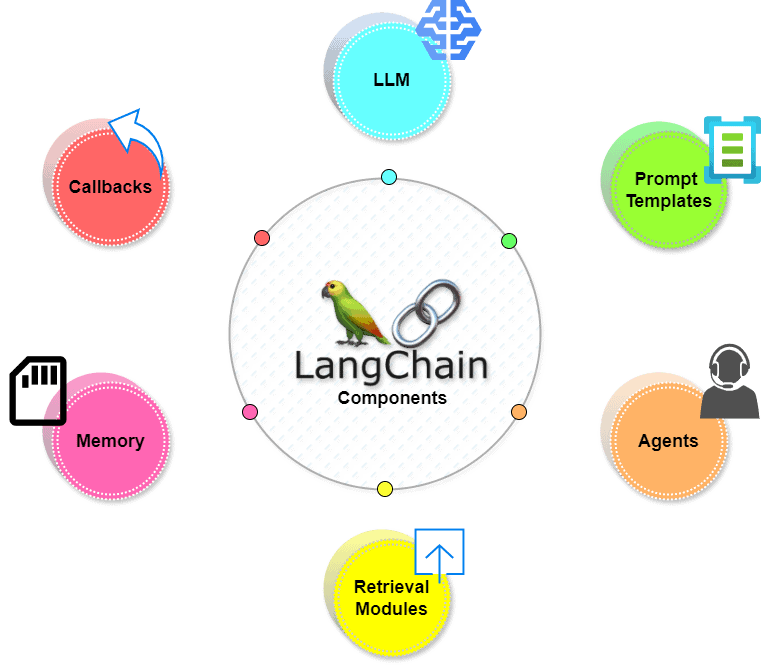

Core Components of LangChain

LangChain provides several core components that enable software teams to build context-aware language model systems effectively. These components are designed to streamline development and enhance the functionality of LLM-based applications.

Here are the key components:

1. LLM Interface:

LangChain offers APIs that allow developers to connect and query various LLMs, such as GPT, Bard, and PaLM. These APIs simplify the process of interfacing with both public and proprietary models, enabling developers to make straightforward API calls instead of writing complex code.
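The value of a common interface can be shown with a small sketch in plain Python. It does not use LangChain itself: `TextModel`, `EchoModel`, `ShoutModel`, and `ask` are hypothetical names, and the two model classes are trivial stand-ins for real providers.

```python
from typing import Protocol


class TextModel(Protocol):
    """Anything with a generate(prompt) method counts as a model here."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    # Stand-in for one provider.
    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutModel:
    # Stand-in for a second provider with different behavior.
    def generate(self, prompt: str) -> str:
        return prompt.upper()


def ask(model: TextModel, question: str) -> str:
    # Application code depends only on the shared interface.
    return model.generate(f"Question: {question}")


for model in (EchoModel(), ShoutModel()):
    print(type(model).__name__, "->", ask(model, "What is LangChain?"))
```

Because `ask` only relies on `generate`, a different model can be swapped in without changing the application code.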

2. Prompt Templates:

Prompt templates are pre-built structures that help developers consistently format queries for AI models. They can be used for various applications, including chatbots, few-shot learning, or providing specific instructions to language models. These templates are reusable across different applications and models, ensuring consistency and efficiency.
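As a rough illustration of what a prompt template does, here is a minimal sketch built only on the Python standard library. `SimplePromptTemplate` is a hypothetical class written for this post, not LangChain's own implementation.

```python
from string import Formatter


class SimplePromptTemplate:
    """A reusable prompt with named placeholders such as {text}."""

    def __init__(self, template: str):
        self.template = template
        # Collect the placeholder names that appear in the template.
        self.input_variables = sorted(
            {name for _, name, _, _ in Formatter().parse(template) if name}
        )

    def format(self, **values: str) -> str:
        # Fail early if a placeholder has no value.
        missing = [n for n in self.input_variables if n not in values]
        if missing:
            raise KeyError(f"missing variables: {missing}")
        return self.template.format(**values)


translate = SimplePromptTemplate("Translate the following text into {language}: {text}")
print(translate.input_variables)
print(translate.format(language="French", text="Good morning"))
```

The same template object can be reused for every request, which keeps the wording of the prompt consistent across the application.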

3. Agents:

Agents are specialized chains that prompt the language model to determine the best sequence of actions in response to a query. Developers can use LangChain’s tools and libraries to compose and customize these chains for complex applications. By providing the user’s input, available tools, and possible intermediate steps, the language model can return a viable sequence of actions for the application.
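The loop behind an agent can be sketched as follows. This is a simplified illustration under stated assumptions: `stub_planner` is a hypothetical rule-based stand-in for the language model's decision, and `TOOLS`, `run_agent`, and the tool names are invented for this example rather than taken from LangChain.

```python
from typing import Callable

# Tools the agent may call; each takes text and returns text.
TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "shout": lambda text: text.upper(),
}


def stub_planner(query: str, steps: list[tuple[str, str, str]]) -> tuple[str, str]:
    """Stand-in for the LLM: choose the next action from the query and past steps."""
    if steps:
        # A tool has already run, so finish with its observation.
        return ("finish", steps[-1][2])
    if query.startswith("count:"):
        return ("word_count", query[len("count:"):].strip())
    return ("shout", query)


def run_agent(query: str, max_steps: int = 3) -> str:
    steps: list[tuple[str, str, str]] = []  # (tool, input, observation)
    for _ in range(max_steps):
        action, argument = stub_planner(query, steps)
        if action == "finish":
            return argument
        observation = TOOLS[action](argument)
        steps.append((action, argument, observation))
    # Step budget exhausted: fall back to the last observation, if any.
    return steps[-1][2] if steps else ""


print(run_agent("count: one two three"))  # prints: 3
print(run_agent("hello"))                 # prints: HELLO
```

In a real agent, the planner's choice would come from prompting the model with the user's input, the tool descriptions, and the intermediate steps so far.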

4. Retrieval Modules:

LangChain supports the creation of Retrieval Augmented Generation (RAG) systems with tools to transform, store, search, and retrieve information. Developers can create semantic representations of information using embeddings and store them in local or cloud vector databases. At query time, the most relevant entries are retrieved and passed to the language model as context, which improves its responses.
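The retrieval step can be sketched with a toy example. To stay self-contained it uses word counts as a crude substitute for learned embeddings and a Python list in place of a vector database; `embed`, `cosine`, `retrieve`, `build_prompt`, and the sample documents are all hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": word counts. Real systems use learned vectors.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


DOCS = [
    "refunds are processed within five business days",
    "our office is closed on public holidays",
    "passwords must be reset every ninety days",
]
# The "vector store": each document kept alongside its vector.
INDEX = [(doc, embed(doc)) for doc in DOCS]


def retrieve(query: str, k: int = 1) -> list[str]:
    q = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query: str) -> str:
    # Retrieved text is placed in the prompt as context for the model.
    context = "\n".join(retrieve(query))
    return f"Context:\n{context}\n\nQuestion: {query}"


print(build_prompt("how long do refunds take"))
```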

5. Memory:

LangChain allows developers to incorporate memory capabilities into their systems, enhancing conversational language model applications by recalling information from past interactions. This includes:

- Simple Memory Systems: Recall the most recent conversations.

- Complex Memory Structures: Analyze historical messages to return the most relevant results.
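The simple case, recalling only the most recent conversation turns, can be sketched like this. `WindowMemory` and its methods are hypothetical names for this illustration, not LangChain classes.

```python
from collections import deque


class WindowMemory:
    """Keep only the most recent conversation turns."""

    def __init__(self, max_turns: int = 2):
        # A deque with maxlen silently drops the oldest turn when full.
        self.turns: deque[tuple[str, str]] = deque(maxlen=max_turns)

    def save(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))

    def as_prompt_prefix(self) -> str:
        # Render remembered turns so they can be prepended to the next prompt.
        return "\n".join(f"User: {u}\nAssistant: {a}" for u, a in self.turns)


memory = WindowMemory(max_turns=2)
memory.save("My name is Ada.", "Nice to meet you, Ada.")
memory.save("I like chess.", "Chess is a great game.")
memory.save("What should I read?", "Try a book on openings.")
print(memory.as_prompt_prefix())
```

After the third turn is saved, the first one has been dropped, so the prefix contains only the two latest exchanges. A more complex memory would instead search the full history for the messages most relevant to the current question.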

6. Callbacks:

Callbacks are functions that developers register to log, monitor, and stream specific events during LangChain operations. They can track when a chain was first called, log errors encountered, and provide insights into the application’s performance and behavior.
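The callback pattern can be sketched in plain Python. The hook names `on_start`, `on_end`, and `on_error`, as well as `RecordingHandler` and `run_with_callbacks`, are hypothetical and chosen for this example only.

```python
import time
from typing import Callable


class RecordingHandler:
    """Collects events so they can be logged or inspected later."""

    def __init__(self) -> None:
        self.events: list[tuple] = []

    def on_start(self, name: str) -> None:
        self.events.append(("start", name))

    def on_end(self, name: str, seconds: float) -> None:
        self.events.append(("end", name, seconds))

    def on_error(self, name: str, error: Exception) -> None:
        self.events.append(("error", name, repr(error)))


def run_with_callbacks(name: str, step: Callable[[str], str], arg: str, handlers: list) -> str:
    for handler in handlers:
        handler.on_start(name)
    started = time.perf_counter()
    try:
        result = step(arg)
    except Exception as error:
        # Notify handlers, then let the error propagate to the caller.
        for handler in handlers:
            handler.on_error(name, error)
        raise
    elapsed = time.perf_counter() - started
    for handler in handlers:
        handler.on_end(name, elapsed)
    return result


handler = RecordingHandler()
print(run_with_callbacks("upper", str.upper, "hi", [handler]))
print([event[0] for event in handler.events])
```

The step being run knows nothing about logging; the handlers observe it from outside, which is what makes callbacks useful for monitoring.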

LangChain Requirements With AWS

AWS offers a robust infrastructure to support LangChain requirements for building and deploying generative AI applications on enterprise data. Here’s how each component fits into the architecture:

Amazon Bedrock

- Purpose: Managed service for building and deploying generative AI applications.

- Role in LangChain: Provides generative models that can be accessed and utilized within LangChain.

Amazon Kendra

- Purpose: ML-powered internal search service.

- Role in LangChain: Enhances language model outputs by searching proprietary data sources and supplying the relevant results as context.

Amazon SageMaker JumpStart

- Purpose: ML hub offering pre-built algorithms and foundation models.

- Role in LangChain: Hosts foundation models that can be quickly deployed and prompted from LangChain for various AI tasks.

Integration with LangChain

- Interface: LangChain acts as the connecting layer that integrates Amazon Bedrock, Amazon Kendra, and Amazon SageMaker JumpStart.

- Outcome: Facilitates the creation of generative AI applications that ground LLM responses in enterprise data.

Conclusion

LangChain is a significant step forward for AI application development, giving developers practical tools to harness the capabilities of large language models. By abstracting the complexities of integrating LLMs such as GPT, Bard, and PaLM, LangChain helps both experts and newcomers create sophisticated, context-aware applications.

FAQs

What is LangChain?

LangChain is an open-source framework designed to simplify the development of applications using large language models (LLMs). It provides a suite of tools, APIs, and modular components that streamline the integration and utilization of LLMs such as GPT, Bard, and PaLM.

Who developed LangChain?

LangChain was developed by Harrison Chase and launched in October 2022. It has since become one of the fastest-growing open-source projects on GitHub, gaining popularity for its ability to democratize access to advanced AI technologies.

How does LangChain simplify AI development?

LangChain abstracts the complexities of working with LLMs, providing pre-built components like prompt templates, agents, retrieval modules, and memory capabilities. This abstraction reduces the need for deep technical expertise, making it easier for developers to create and deploy AI applications quickly.

How is LangChain different from other AI frameworks?

LangChain distinguishes itself by focusing specifically on simplifying the development of applications using LLMs. Its modular approach and emphasis on abstraction make it particularly suited for tasks that require natural language understanding and generation.

Is LangChain actively maintained and supported?

Yes, LangChain is an active open-source project with ongoing development and support from its community. Updates, bug fixes, and new features are regularly released, ensuring that developers have access to the latest advancements in AI technology.
